Big Data and AI offer enormous potential in data processing outside the IT sector as well. This is no longer a secret. However, outdated structures often still exist when it comes to strategy, project, action and task management. But how do you solve this problem? Is it perhaps ultimately all just a question of the right data?

Following the example of Toyota’s Lean Production System, companies have pursued continuous improvement of their production processes in recent decades. Consequently, many are now asking how they can also make other internal or external business processes leaner, faster and more cost-effective. Unlike the classic production process, these business processes usually centre not on the flow of materials but on the exchange of documents and information.

In the so-called “Internet of Everything”, processes (delivering the right information at the right time in the right format to the right addressees) and data (as helpful support for decision-making) play a particularly important role alongside people and things (“Internet of Things”). The data available in the company thus moves to the centre of attention and forms the basis for optimizing processes (process mining) and for managing a company successfully in general.

Evaluating and optimizing complex, extensively networked data and process flows can hardly be managed without artificial intelligence (AI). Engaging with AI has therefore become unavoidable in digital transformation. AI technologies enable the intelligent acquisition and processing of raw data, powerful pattern recognition and the derivation of clear recommendations for action.

The five "Vs" of Big Data

Powerful AI systems and all applications based on them stand or fall with the availability of a comprehensive, high-quality data basis. The so-called “five Vs” of Big Data should be taken into account here: Volume, Velocity, Variety, Veracity and Value. They are explained clearly in, for example, the book “Künstliche Intelligenz verstehen” by Ralf T. Kreutzer and Marie Sirrenberg.

Volume describes the amount of data available. The breadth and depth of the available data determine this volume.

As more sensors are deployed and more and more objects are networked, ever larger data streams are generated. According to Statista (2017), the annual volume of data produced worldwide will increase tenfold by 2025, to 163 zettabytes.

Velocity describes the speed at which data sets are created, updated, analyzed and/or deleted. Today, many changes can be recorded, documented and, if necessary, evaluated in real time.

On the one hand, Variety means the multitude of internal and external data sources that have to be processed, often simultaneously, for example in AI applications. On the other hand, Variety also refers to the multitude of different data formats (such as structured, semi-structured and unstructured text files as well as photos and videos) that need to be evaluated.

Veracity refers to the quality of the available data and data sources. Unlike the downstream criterion Value, Veracity is concerned not with the relevance of the data but with its formal information content in general. Data quality in this sense covers four dimensions: correctness (freedom from errors), completeness (coverage of all relevant fields), consistency (freedom from contradictions) and topicality (the data is up to date and still valid).
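
The four Veracity dimensions can be made concrete with a small check. The following is a minimal Python sketch rather than a reference implementation: the record layout (`task_id`, `owner`, `status`, `progress`, `updated`), the allowed status values and the 90-day freshness threshold are all assumptions chosen for illustration.

```python
from datetime import date, timedelta

# Hypothetical record layout and rules, chosen only for illustration.
REQUIRED_FIELDS = ("task_id", "owner", "status", "progress", "updated")
ALLOWED_STATUS = {"open", "in progress", "done"}
MAX_AGE = timedelta(days=90)  # assumed freshness threshold


def veracity_issues(record, today):
    """Return a list of (dimension, message) pairs for one record."""
    issues = []

    # Completeness: every relevant field is present and non-empty.
    missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    if missing:
        issues.append(("completeness", "missing: " + ", ".join(missing)))

    # Correctness: values are formally valid.
    status = record.get("status")
    if status not in (None, "") and status not in ALLOWED_STATUS:
        issues.append(("correctness", f"unknown status {status!r}"))
    progress = record.get("progress")
    if isinstance(progress, (int, float)) and not 0 <= progress <= 100:
        issues.append(("correctness", "progress outside 0..100"))

    # Consistency: fields must not contradict each other.
    if status == "done" and isinstance(progress, (int, float)) and progress < 100:
        issues.append(("consistency", "status is done but progress < 100"))

    # Topicality: the record was updated recently enough.
    updated = record.get("updated")
    if isinstance(updated, date) and today - updated > MAX_AGE:
        issues.append(("topicality", "last update older than 90 days"))

    return issues
```

A record that is marked `done` at 50 percent progress, has an empty owner and was last updated more than 90 days ago would be flagged for completeness, consistency and topicality, while its status and progress values still pass the correctness check.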

Finally, Value means the (added) value or usability and thus the relevance of the data with regard to a specific application.

Using Big Data for strategy, project and action management

It is not surprising that global players such as Google, Microsoft and Apple have long since recognized the value of data and are pioneers in the field of Big Data and AI. Nevertheless, the new data processing technologies also offer enormous potential for companies of other sizes and outside the IT industry.

However, these companies often still have outdated structures in terms of strategy, project, action and task management. Progress is documented in various Excel lists, tasks are sent via e-mail and Word documents exist in various versions on local computers. The best strategy to address this problem and benefit from the valuable data within a company is the use of software in the form of an intelligent database system.

In addition to the “five Vs”, three central aspects should be considered when selecting a suitable system: how data is collected; how data quality, integrity and security are ensured; and how data processing and visualization are implemented.

Data Collection: What relevant data is already available internally? What can be procured externally? What data gaps are becoming apparent, and how can they be closed? How can continuous data acquisition be ensured?

- Central database system

- Accessible everywhere (cloud-based)

- User-friendly interface (intuitive to use)

Data Quality (Value): The data stored in the database must meet the standards and requirements of the company. To this end, the database system applies a set of rules to a specific or complete dataset and then stores it in the target database.

- Predefined fields: Field format (number field, text field, user-defined field, ...)

- Categories with predefined selection options

- Mapping of the entire organizational structure and cross-sectional topics
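
How such a rule set with predefined field formats and fixed selection options might be applied before a record reaches the target database can be sketched as follows. The field names, the categories and the helpers `validate_record` and `store` are hypothetical; a production system would typically enforce the same rules through database constraints or a validation layer.

```python
# Hypothetical schema: field name -> (expected type, allowed values or None).
SCHEMA = {
    "title": (str, None),
    "budget": (float, None),
    "priority": (str, {"low", "medium", "high"}),
    "department": (str, {"sales", "production", "quality"}),
}


def validate_record(record):
    """Return a dict of field -> error message; empty means the record passes."""
    errors = {}
    for field, (expected_type, allowed) in SCHEMA.items():
        if field not in record:
            continue  # presence is a separate concern (mandatory fields)
        value = record[field]
        if not isinstance(value, expected_type):
            errors[field] = f"expected {expected_type.__name__}"
        elif allowed is not None and value not in allowed:
            errors[field] = "not a predefined option"
    # Fields outside the schema are rejected rather than silently stored.
    for field in record:
        if field not in SCHEMA:
            errors[field] = "unknown field"
    return errors


def store(record, target):
    """Append the record to the target only if it passes all rules."""
    errors = validate_record(record)
    if not errors:
        target.append(record)
    return errors
```

A record whose budget arrives as the string `"1200"` or whose priority is `"urgent"` would be returned with one error per offending field and would not be appended to the target list.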

Data Integrity (Veracity): Data integrity is concerned with the correctness and completeness of the data in the database. It uses various rules and processes to control how data is entered, stored, and shared, among other things.

- Mandatory fields

- Roles and rights concept

- Review function

- GDPR conformity
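
Mandatory fields, a roles and rights concept and a review function can be combined in a few lines. This is a minimal sketch under stated assumptions: the role names, the permission sets and the list of mandatory fields are invented for illustration and do not describe any particular product.

```python
# Hypothetical roles and the actions each one may perform.
PERMISSIONS = {
    "viewer": {"read"},
    "editor": {"read", "write"},
    "reviewer": {"read", "write", "approve"},
}
MANDATORY_FIELDS = ("title", "owner", "due_date")  # assumed for illustration


def can(role, action):
    """True if the role is known and allowed to perform the action."""
    return action in PERMISSIONS.get(role, set())


def save(record, role, store):
    """Store a record as unreviewed if the role may write and nothing mandatory is missing."""
    if not can(role, "write"):
        raise PermissionError(f"role {role!r} may not write")
    missing = [f for f in MANDATORY_FIELDS if not record.get(f)]
    if missing:
        raise ValueError("missing mandatory fields: " + ", ".join(missing))
    store.append({**record, "approved": False})


def approve(index, role, store):
    """Review function: only roles with the approve right can release a record."""
    if not can(role, "approve"):
        raise PermissionError(f"role {role!r} may not approve")
    store[index]["approved"] = True
```

Separating `save` from `approve` means that a record is never both entered and released by a role that lacks the review right, which is one simple way to enforce a four-eyes check.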

Data Security: While data integrity is about keeping data accurate and usable throughout its life cycle, data security aims to protect data against external attacks (as well as internal breaches). Data security comprises all measures that keep the data safe.

- Password policy

- Encryption of the data

- Secure storage and recovery

- Updates and bug-fixing

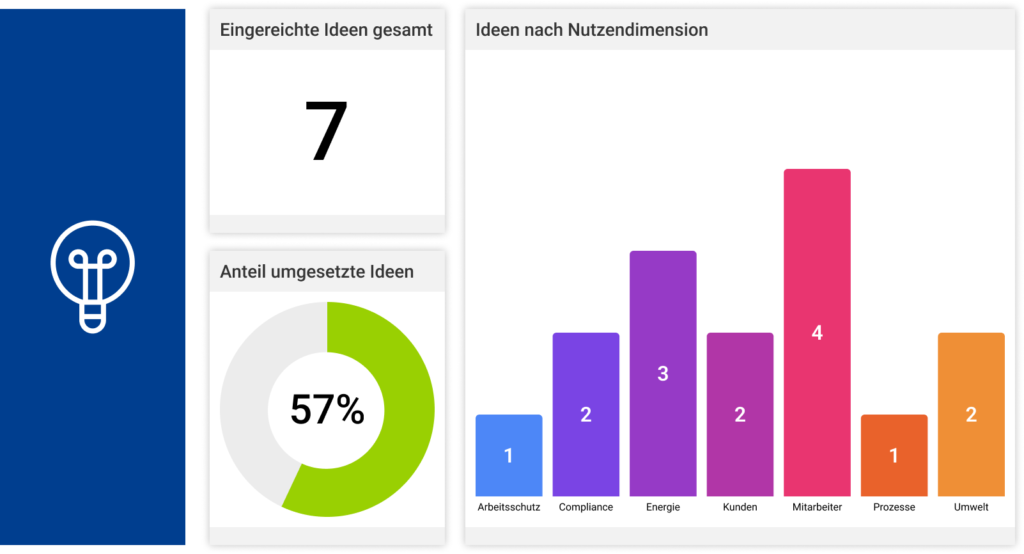

Data Processing & Visualization: To create tangible added value, the available data must be processed and visualized. Ideally, individual tasks or measures as well as entire projects or strategies can be tracked with pinpoint accuracy, and intelligent, individually configurable reports can be generated at the push of a button. Employees and management are thus constantly informed about the current status of all tasks and projects to be completed and have a quick overview at all levels thanks to integrated reporting functions: see, understand, act, achieve goals.

- Data cube / OLAP cube

- Dashboard

- Reporting in real time
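
The idea behind a data cube is that the same facts can be aggregated along any combination of dimensions. The following plain-Python sketch illustrates this with hypothetical task records that carry the dimensions `department`, `project` and `status` and the measure `hours`; all names and values are assumptions, and a real OLAP engine would precompute and index such aggregates instead of scanning rows.

```python
from collections import defaultdict

# Hypothetical fact rows: three dimensions and one measure (hours).
FACTS = [
    {"department": "sales", "project": "CRM", "status": "done", "hours": 8},
    {"department": "sales", "project": "CRM", "status": "open", "hours": 5},
    {"department": "production", "project": "Lean", "status": "open", "hours": 13},
    {"department": "production", "project": "CRM", "status": "done", "hours": 3},
]


def roll_up(facts, dimensions, measure="hours"):
    """Sum the measure for every combination of the chosen dimensions."""
    totals = defaultdict(int)
    for row in facts:
        key = tuple(row[d] for d in dimensions)
        totals[key] += row[measure]
    return dict(totals)


def slice_by(facts, **fixed):
    """Keep only rows whose dimensions match the fixed values."""
    return [r for r in facts if all(r[k] == v for k, v in fixed.items())]
```

Calling `roll_up(FACTS, ["department"])` gives the total hours per department, while `roll_up(slice_by(FACTS, status="open"), ["project"])` first restricts the cube to open tasks and then aggregates by project. A dashboard could be fed from exactly these two operations.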

Intelligent control of business processes in times of digitalization - is it all just a question of the right data?

Yes, but not only that. Simple data collection that takes data quality, integrity and security into account requires software support in the form of an intelligent, central database system. With the help of such a system, the information (data cube) can be analyzed in all conceivable dimensions and evaluated in real time. This makes it possible to optimize and streamline processes (process mining), create transparency, prevent redundant data storage and ultimately significantly reduce internal company costs. The solutions of MSO exceed all requirements for intelligent, software-supported control of business processes and use state-of-the-art technology (Big Data, Data Cube, AI, etc.).